Conjurer of Compression


The world of magic had Houdini, who pioneered tricks that are still performed today. And data compression has Jacob Ziv.

In 1977, Ziv, working with Abraham Lempel, published the equivalent of Houdini on Magic: a paper in the IEEE Transactions on Information Theory titled “A Universal Algorithm for Sequential Data Compression.” The algorithm described in the paper came to be called LZ77, after the authors' names (in alphabetical order) and the year. LZ77 wasn't the first lossless compression algorithm, but it was the first that could work its magic in a single step.

The next year, the two researchers issued a refinement, LZ78. Together with LZ77, that algorithm became the basis for the Unix compress program used in the early ’80s; WinZip and Gzip, born in the early ’90s; and the GIF and TIFF image formats. Without these algorithms, we would likely be mailing large data files on discs instead of sending them across the Internet with a click, buying our music on CDs instead of streaming it, and scrolling through Facebook feeds that don't have bouncing animated images.

Ziv went on to partner with other researchers on other innovations in compression. It is his full body of work, spanning more than half a century, that earned him the 2021 IEEE Medal of Honor “for fundamental contributions to information theory and data compression technology, and for distinguished research leadership.”

Ziv was born in 1931 to Russian immigrants in Tiberias, a city then in British-ruled Palestine and now part of Israel. Electricity and gadgets, and little else, fascinated him as a child. While practicing violin, for example, he came up with a scheme to turn his music stand into a lamp. He also tried to build a Marconi transmitter from metal player-piano parts. When he plugged the contraption in, the entire house went dark. He never did get that transmitter to work.

When the Arab-Israeli War began in 1948, Ziv was in high school. Drafted into the Israel Defense Forces, he served briefly on the front lines until a group of mothers held organized protests, demanding that the youngest soldiers be sent elsewhere. Ziv's reassignment took him to the Israeli Air Force, where he trained as a radar technician. When the war ended, he entered Technion–Israel Institute of Technology to study electrical engineering.

After completing his master's degree in 1955, Ziv returned to the defense world, this time joining Israel's National Defense Research Laboratory (now Rafael Advanced Defense Systems) to develop electronic components for use in missiles and other military systems. The trouble was, Ziv recalls, that none of the engineers in the group, including himself, had more than a basic understanding of electronics. Their electrical engineering education had focused more on power systems.

“We had about six people, and we had to teach ourselves,” he says. “We would pick a book and then study together, like religious Jews studying the Hebrew Bible. It wasn't enough.”

The group's goal was to build a telemetry system using transistors instead of vacuum tubes. They needed not only knowledge, but parts. Ziv contacted Bell Telephone Laboratories and requested a free sample of its transistor; the company sent 100.

“That covered our needs for a few months,” he says. “I give myself credit for being the first one in Israel to do something serious with the transistor.”

In 1959, Ziv was selected as one of a handful of researchers from Israel's defense lab to study abroad. That program, he says, transformed the evolution of science in Israel. Its organizers didn't steer the selected young engineers and scientists into particular fields. Instead, they let them pursue any type of graduate studies in any Western nation.


Ziv planned to continue working in communications, but he was no longer interested in just the hardware. He had recently read Information Theory (Prentice-Hall, 1953), one of the earliest books on the subject, by Stanford Goldman, and he decided to make information theory his focus. And where else would one study information theory but MIT, where Claude Shannon, the field's pioneer, had started out?

Ziv arrived in Cambridge, Mass., in 1960. His Ph.D. research involved a method of determining how to encode and decode messages sent through a noisy channel, minimizing the probability of error while at the same time keeping the decoding simple.

“Information theory is beautiful,” he says. “It tells you what is the best that you can ever achieve, and [it] tells you how to approximate the outcome. So if you invest the computational effort, you can know you are approaching the best outcome possible.”

Ziv contrasts that certainty with the uncertainty of a deep-learning algorithm. It may be clear that the algorithm is working, but nobody really knows whether it is the best result possible.

While at MIT, Ziv held a part-time job at U.S. defense contractor Melpar, where he worked on error-correcting software. He found this work less beautiful. “In order to run a computer program at the time, you had to use punch cards,” he recalls. “And I hated them. That is why I didn't go into real computer science.”

Back at the Defense Research Laboratory after two years in the United States, Ziv took charge of the Communications Department. Then in 1970, with several other coworkers, he joined the faculty of Technion.

There he met Abraham Lempel. The two discussed trying to improve lossless data compression.

The state of the art in lossless data compression at the time was Huffman coding. This approach starts by finding sequences of bits in a data file and then sorting them by the frequency with which they appear. Then the encoder builds a dictionary in which the most common sequences are represented by the smallest number of bits. This is the same idea behind Morse code: The most frequent letter in the English language, e, is represented by a single dot, while rarer letters have more complex combinations of dots and dashes.
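To make the frequency-to-length idea concrete, here is a minimal Python sketch of Huffman code construction. It assumes the input is a string and treats each character, rather than longer bit sequences, as a symbol; `huffman_code` and `huffman_encode` are illustrative names, not a library API. Note the two passes over the input: one to count frequencies and build the dictionary, one to encode.

```python
import heapq
from collections import Counter


def huffman_code(text: str) -> dict[str, str]:
    """Build a prefix-free code in which frequent symbols get short codes."""
    freq = Counter(text)
    if len(freq) <= 1:  # empty input, or only one distinct symbol
        return {sym: "0" for sym in freq}
    # Heap entries: (subtree frequency, tie-breaker, {symbol: partial code}).
    heap = [(n, i, {sym: ""}) for i, (sym, n) in enumerate(freq.items())]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        # Merge the two least frequent subtrees, prefixing 0 and 1.
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (f1 + f2, tie, merged))
        tie += 1
    return heap[0][2]


def huffman_encode(text: str) -> tuple[dict[str, str], str]:
    code = huffman_code(text)  # pass 1: count frequencies, build the dictionary
    bits = "".join(code[ch] for ch in text)  # pass 2: encode
    return code, bits


code, bits = huffman_encode("abracadabra")
print(len(code["a"]), len(bits))  # 1-bit code for 'a', 23 bits in total
```

On "abracadabra", the most frequent letter, a, gets a 1-bit code, and the whole 11-letter string fits in 23 bits. The dictionary itself would still have to be stored or sent alongside those bits.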

Huffman coding, while still used today in the MPEG-2 compression format and a lossless form of JPEG, has its drawbacks. It requires two passes through a data file: one to calculate the statistical features of the file, and the second to encode the data. And storing the dictionary along with the encoded data adds to the size of the compressed file.

Ziv and Lempel wondered if they could develop a lossless data-compression algorithm that would work on any kind of data, did not require preprocessing, and would achieve the best compression for that data, a target defined by something known as the Shannon entropy. It was unclear if their goal was even possible. They decided to find out.
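The entropy target is easiest to see for a memoryless source whose symbols occur with fixed probabilities p: the entropy H = -sum(p * log2(p)), in bits per symbol, is then a lower bound on the average length of any lossless symbol-by-symbol code. The sketch below estimates H from observed symbol frequencies. This "order-0" estimate ignores dependencies between neighboring symbols, so it is a simplification of the entropy rate used for sources with memory; `shannon_entropy` is an illustrative name.

```python
import math
from collections import Counter


def shannon_entropy(data: str) -> float:
    """Order-0 empirical entropy in bits per symbol: H = -sum(p * log2(p))."""
    n = len(data)
    counts = Counter(data)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


print(shannon_entropy("abab"))  # 1.0: two equally likely symbols
print(round(shannon_entropy("abracadabra"), 2))  # 2.04
```

Under this estimate, "abracadabra" carries about 2.04 bits per symbol, so no code that assigns a fixed bit string to each letter can average fewer bits than that on it.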

Ziv says he and Lempel were the “perfect match” to tackle this question. “I knew all about information theory and statistics, and Abraham was well equipped in Boolean algebra and computer science.”

The two came up with the idea of having the algorithm look for unique sequences of bits at the same time that it is compressing the data, using pointers to refer to previously seen sequences. This approach requires only one pass through the file, so it is faster than Huffman coding.

Ziv explains it this way: “You look at incoming bits to find the longest stretch of bits for which there is a match in the past. Let's say that first incoming bit is a 1. Now, since you have only one bit, you have never seen it in the past, so you have no choice but to transmit it as is.”

“But then you get another bit,” he continues. “Say that's a 1 as well. So you enter into your dictionary 1-1. Say the next bit is a 0. So in your dictionary you now have 1-1 and also 1-0.”

Here's where the pointer comes in. The next time that the stream of bits includes a 1-1 or a 1-0, the software doesn't transmit those bits. Instead it sends a pointer to the location where that sequence first appeared, along with the length of the matched sequence. The number of bits that you need for that pointer is very small.
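The pointer idea can be sketched in a few lines of Python. This follows the common textbook presentation of LZ77 rather than the exact construction in the 1977 paper: each step emits a triple giving how far back the match starts (offset), how long it is (length), and the next symbol after the match. The brute-force search and the `window` and `max_len` limits are illustrative assumptions; practical encoders use hash tables to find matches quickly.

```python
def lz77_encode(data: str, window: int = 4096,
                max_len: int = 15) -> list[tuple[int, int, str]]:
    """Textbook LZ77: emit (offset, length, next symbol) triples."""
    out: list[tuple[int, int, str]] = []
    i = 0
    while i < len(data):
        best_off, best_len = 0, 0
        # Brute-force search of the window for the longest earlier match.
        for j in range(max(0, i - window), i):
            length = 0
            # Stop one symbol early so a "next symbol" always remains;
            # the match may run past i (overlap), which LZ77 permits.
            while (length < max_len and i + length < len(data) - 1
                   and data[j + length] == data[i + length]):
                length += 1
            if length > best_len:
                best_off, best_len = i - j, length
        out.append((best_off, best_len, data[i + best_len]))
        i += best_len + 1
    return out


print(lz77_encode("abababc"))  # [(0, 0, 'a'), (0, 0, 'b'), (2, 4, 'c')]
```

After the first two symbols go out as literals, a single pointer reaching back two positions copies four symbols, overlapping the text it is producing, and the final c rides along as the next symbol.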


“It's basically what they used to do in publishing TV Guide,” Ziv says. “They would run a synopsis of each program once. If the program appeared more than once, they didn't republish the synopsis. They just said, go back to page x.”

Decoding in this way is even simpler, because the decoder doesn't have to identify unique sequences. Instead it finds the locations of the sequences by following the pointers and then replaces each pointer with a copy of the relevant sequence.
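A matching decoder shows why this is the easy half: there is no searching, only copying. The sketch assumes the same textbook (offset, length, next symbol) triple format used in common LZ77 presentations; `lz77_decode` is an illustrative name. Copying one symbol at a time lets a pointer refer to text that it is itself in the middle of producing.

```python
def lz77_decode(triples: list[tuple[int, int, str]]) -> str:
    """Rebuild text from (offset, length, next symbol) triples."""
    out: list[str] = []
    for offset, length, nxt in triples:
        start = len(out) - offset
        # Copy one symbol at a time so a match may overlap its own output.
        for k in range(length):
            out.append(out[start + k])
        out.append(nxt)
    return "".join(out)


print(lz77_decode([(0, 0, "a"), (0, 0, "b"), (2, 4, "c")]))  # abababc
```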

The algorithm did everything Ziv and Lempel had set out to do: It proved that universally optimal lossless compression without preprocessing was possible.

“At the time they published their work, the fact that the algorithm was crisp and elegant and was easily implementable with low computational complexity was almost irrelevant,” says Tsachy Weissman, an electrical engineering professor at Stanford University who specializes in information theory. “It was more about the theoretical result.”

Eventually, though, researchers recognized the algorithm's practical implications, Weissman says. “The algorithm itself became really useful when our technologies started dealing with larger file sizes beyond 100,000 or even a million characters.”

“Their story is a story about the power of fundamental theoretical research,” Weissman adds. “You can establish theoretical results about what should be achievable, and decades later humanity benefits from the implementation of algorithms based on those results.”

Ziv and Lempel kept working on the technology, trying to get closer to entropy for small data files. That work led to LZ78. Ziv says LZ78 seems similar to LZ77 but is actually very different, because it anticipates the next bit. “Let's say the first bit is a 1, so you enter in the dictionary two codes, 1-1 and 1-0,” he explains. You can imagine these two sequences as the first branches of a tree.

“When the second bit comes,” Ziv says, “if it's a 1, you send the pointer to the first code, the 1-1, and if it's 0, you point to the other code, 1-0. And then you extend the dictionary by adding two more possibilities to the selected branch of the tree. As you do that repeatedly, sequences that appear more frequently will grow longer branches.”

“It turns out,” he says, “that not only was that the optimal [approach], but so simple that it became useful right away.”
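The tree-growing idea can be sketched with the textbook form of LZ78, which differs in detail from Ziv's binary-tree description above: each output pair names the longest phrase already in the dictionary plus the one symbol that extends it, and that extended phrase becomes a single new branch. A plain Python `dict` keyed by phrase stands in for the tree here, and the function names are illustrative.

```python
def lz78_encode(data: str) -> list[tuple[int, str]]:
    """Textbook LZ78: emit (index of longest known phrase, next symbol)."""
    dictionary = {"": 0}  # phrase -> index; index 0 is the empty root
    out: list[tuple[int, str]] = []
    phrase = ""
    for ch in data:
        if phrase + ch in dictionary:
            phrase += ch  # keep walking down an existing branch
        else:
            out.append((dictionary[phrase], ch))
            dictionary[phrase + ch] = len(dictionary)  # grow a new branch
            phrase = ""
    if phrase:  # input ended partway down a known branch
        out.append((dictionary[phrase[:-1]], phrase[-1]))
    return out


def lz78_decode(pairs: list[tuple[int, str]]) -> str:
    phrases = [""]  # rebuilt in the same order the encoder created them
    for idx, ch in pairs:
        phrases.append(phrases[idx] + ch)
    return "".join(phrases[1:])


pairs = lz78_encode("abababab")
print(pairs)  # [(0, 'a'), (0, 'b'), (1, 'b'), (3, 'a'), (0, 'b')]
print(lz78_decode(pairs))  # abababab
```

Later phrases (ab, then aba) are built on earlier ones, so a pattern that keeps recurring is covered by ever-longer dictionary entries, much as Ziv describes frequent sequences growing longer branches.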

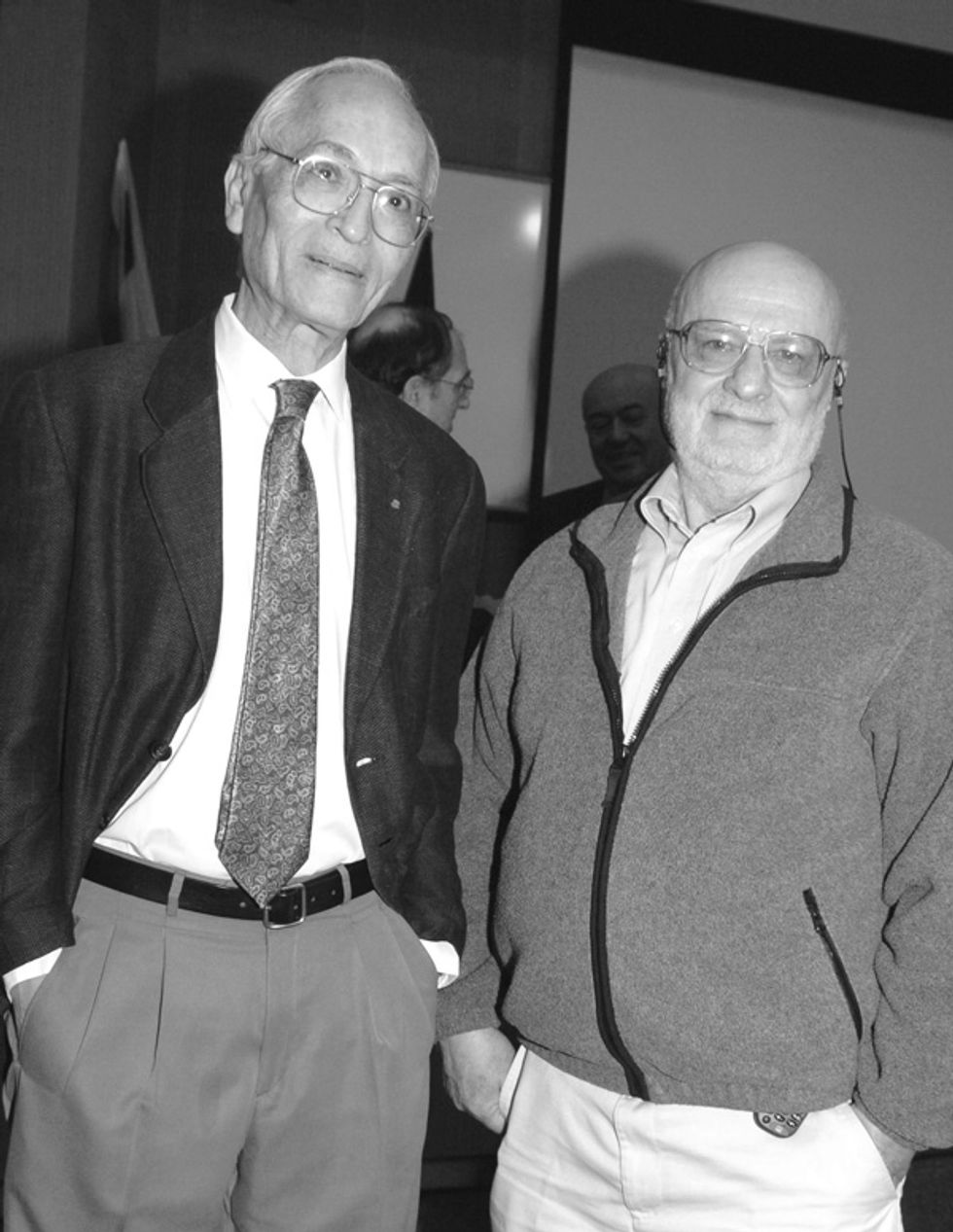

Jacob Ziv (left) and Abraham Lempel published algorithms for lossless data compression in 1977 and 1978, both in the IEEE Transactions on Information Theory. The methods became known as LZ77 and LZ78 and are still in use today. Photo: Jacob Ziv/Technion


While Ziv and Lempel were working on LZ78, they were both on sabbatical from Technion and working at U.S. companies. They knew their development would be commercially useful, and they wanted to patent it.

“I was at Bell Labs,” Ziv recalls, “and so I thought the patent should belong to them. But they said that it's not possible to get a patent unless it's a piece of hardware, and they were not interested in trying.” (The U.S. Supreme Court didn't open the door to direct patent protection for software until the 1980s.)

However, Lempel's employer, Sperry Rand Corp., was willing to try. It got around the restriction on software patents by building hardware that implemented the algorithm and patenting that device. Sperry Rand followed that first patent with a version adapted by researcher Terry Welch, called the LZW algorithm. It was the LZW variant that spread most widely.
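Welch's change is commonly summarized this way: the dictionary starts out holding every possible single byte, so the encoder can send dictionary indices alone, with no explicit next symbol. The following is a minimal sketch under that description, using 8-bit input and unbounded integer codes. Real implementations, such as Unix compress, also limit the code width and can reset the table; those details are omitted here, and the function names are illustrative.

```python
def lzw_encode(data: bytes) -> list[int]:
    """LZW: the table starts with all 256 single bytes; only indices are sent."""
    table = {bytes([b]): b for b in range(256)}
    w, out = b"", []
    for b in data:
        wc = w + bytes([b])
        if wc in table:
            w = wc  # keep extending the current match
        else:
            out.append(table[w])
            table[wc] = len(table)  # new entry: match plus the next byte
            w = bytes([b])
    if w:
        out.append(table[w])
    return out


def lzw_decode(codes: list[int]) -> bytes:
    table = {b: bytes([b]) for b in range(256)}
    if not codes:
        return b""
    w = table[codes[0]]
    out = [w]
    for code in codes[1:]:
        if code in table:
            entry = table[code]
        elif code == len(table):
            # The encoder used an entry it had only just created.
            entry = w + w[:1]
        else:
            raise ValueError(f"invalid LZW code {code}")
        out.append(entry)
        table[len(table)] = w + entry[:1]  # mirror the encoder's new entry
        w = entry
    return b"".join(out)


codes = lzw_encode(b"TOBEORNOTTOBEORTOBEORNOT")
print(codes)
print(lzw_decode(codes))
```

On this classic test string, the output begins with nine plain byte codes and then switches to seven multi-byte codes (256 and up) as the repeated phrases are recognized.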

Ziv regrets not being able to patent LZ78 directly, but, he says, “We enjoyed the fact that [LZW] was very popular. It made us famous, and we also enjoyed the research it led us to.”

One concept that followed came to be called Lempel-Ziv complexity, a measure of the number of unique substrings contained in a sequence of bits. The fewer unique substrings, the more a sequence can be compressed.

This measure later came to be used to check the security of encryption codes; if a code is truly random, it cannot be compressed. Lempel-Ziv complexity has also been used to analyze electroencephalograms (recordings of electrical activity in the brain) to determine the depth of anesthesia, to diagnose depression, and for other purposes. Researchers have even applied it to analyze pop lyrics, to determine trends in repetitiveness.
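Lempel-Ziv complexity is usually computed by counting phrases: scan the sequence from left to right and end each phrase as soon as it can no longer be copied from text that came before it. The sketch below implements that phrase-counting definition directly, with a simple quadratic-time substring search; `lz_complexity` is an illustrative name, and the pseudo-random comparison string is made-up example data.

```python
import random


def lz_complexity(s: str) -> int:
    """Count phrases in the Lempel-Ziv parsing of s."""
    i, phrases, n = 0, 0, len(s)
    while i < n:
        length = 1
        # Grow the phrase while it can still be copied from earlier text
        # (the copy source may overlap the phrase, minus its last symbol).
        while i + length <= n and s[i:i + length] in s[:i + length - 1]:
            length += 1
        phrases += 1  # the last phrase may simply run into the end of s
        i += length
    return phrases


periodic = "01" * 32
rng = random.Random(7)
noisy = "".join(rng.choice("01") for _ in range(64))
print(lz_complexity("0001101001000101"))  # 6 phrases: 0|001|10|100|1000|101
print(lz_complexity(periodic), lz_complexity(noisy))
```

The periodic string parses into just three phrases however long it gets, while the pseudo-random one needs many more. That gap is why low complexity signals a compressible sequence and high complexity is expected of random-looking data.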

Over his career, Ziv published some 100 peer-reviewed papers. While the 1977 and 1978 papers are the most famous, information theorists who came after Ziv have their own favorites.

For Shlomo Shamai, a distinguished professor at Technion, it's the 1976 paper that introduced the Wyner-Ziv algorithm, a way of characterizing the limits of using supplementary information available to the decoder but not the encoder. That problem emerges, for example, in video applications that take advantage of the fact that the decoder has already deciphered the previous frame, which can therefore serve as side information for the next one.

For Vincent Poor, a professor of electrical engineering at Princeton University, it's the 1969 paper describing the Ziv-Zakai bound, a way of knowing whether or not a signal processor is getting the most accurate information possible from a given signal.

Ziv also inspired a number of leading data-compression experts through the classes he taught at Technion until 1985. Weissman, a former student, says Ziv “is deeply passionate about the mathematical beauty of compression as a way to quantify information. Taking a course from him in 1999 had a big part in setting me on the path of my own research.”

He wasn't the only one so inspired. “I took a class on information theory from Ziv in 1979, at the beginning of my master's studies,” says Shamai. “More than 40 years have passed, and I still remember the course. It made me eager to look at these problems, to do research, and to pursue a Ph.D.”

In recent years, glaucoma has taken away most of Ziv's vision. He says that a paper published in IEEE Transactions on Information Theory this January is his last. He is 89.

“I started the paper two and a half years ago, when I still had enough vision to use a computer,” he says. “At the end, Yuval Cassuto, a younger faculty member at Technion, finished the project.” The paper discusses situations in which large information files need to be transmitted quickly to remote databases.

As Ziv explains it, such a need may arise when a doctor wants to compare a patient's DNA sample to past samples from the same patient, to determine if there has been a mutation, or to a library of DNA, to determine if the patient has a genetic disease. Or a researcher studying a new virus may want to compare its DNA sequence to a DNA database of known viruses.

“The problem is that the amount of information in a DNA sample is huge,” Ziv says, “too much to be sent by a network today in a matter of hours or even, sometimes, in days. If you are, say, trying to identify viruses that are changing very quickly in time, that may be too long.”

The approach he and Cassuto describe involves using known sequences that appear commonly in the database to help compress the new data, without first checking for a specific match between the new data and the known sequences.
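The article does not spell out the Cassuto-Ziv method, so the sketch below is only a loose analogy built from a standard tool. zlib's preset-dictionary feature (the `zdict` argument of Python's `zlib.compressobj` and `zlib.decompressobj`) loads known text into the compressor's LZ77 window before any new data arrives, so stretches of new data that repeat the known text can be sent as short pointers, with no separate matching step. The reference and sample are synthetic DNA-like strings, not real data, and zlib can use only the last 32 KB of such a dictionary.

```python
import random
import zlib


def compress_with_reference(data: bytes, reference: bytes) -> bytes:
    # zdict preloads the LZ77 window with known sequences.
    comp = zlib.compressobj(level=9, zdict=reference)
    return comp.compress(data) + comp.flush()


def decompress_with_reference(blob: bytes, reference: bytes) -> bytes:
    # The receiver must hold exactly the same reference dictionary.
    decomp = zlib.decompressobj(zdict=reference)
    return decomp.decompress(blob) + decomp.flush()


# Hypothetical data: a random "known" sequence and a new sample that
# differs from it at a handful of positions.
rng = random.Random(42)
reference = "".join(rng.choice("ACGT") for _ in range(4000)).encode()
mutated = bytearray(reference)
for pos in rng.sample(range(len(mutated)), 10):
    mutated[pos] = ord(rng.choice("ACGT"))
sample = bytes(mutated)

plain = zlib.compress(sample, 9)
primed = compress_with_reference(sample, reference)
print(len(sample), len(plain), len(primed))
assert decompress_with_reference(primed, reference) == sample
```

Without the reference, the compressor sees only a random-looking string over four letters; with it, most of the sample becomes long back-references into the shared dictionary, and the primed output comes out far smaller.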

“I really hope that this research can be used in the future,” Ziv says. If his track record is any indication, Cassuto-Ziv, or perhaps CZ21, will add to his legacy.

This article appears in the May 2021 print issue as “Conjurer of Compression.”
